Latest Articles by Ed Stetzer

Next Generation Evangelism: 3 Approaches and a Way Forward

Though sharing their faith is much more challenging for young people in an increasingly secularized society, young Christians do evangelize. Taking a broad look, young people today seem to take three primary approaches to the practice of evangelism.

Life Still Matters From Womb to Tomb

While the reversal of Roe v. Wade is certainly cause for celebration, pro-life advocates dare not think the cause has been won.

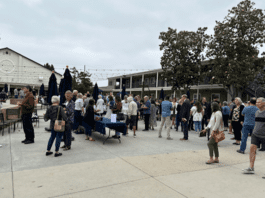

Church Journeys: Calvary Church Santa Ana: A Missions-Focused Church

Just after moving to California, I had the privilege of speaking at Calvary Church of Santa Ana, California. Calvary, planted in 1931, is an influential, historic church in Southern California.

Shadow Side of Mission: Cultivating Redemptive Communities

The urgency and significance of illuminating the shadow side of mission become evident when we consider the transformative power and ethical responsibilities associated with our missional calling.

Generational Shifts in Evangelism: Approaches for Today

We need to think innovatively about how to do evangelism in a 21st-century world. Here are some approaches that can help reach generations today.

‘A High and Holy Honor’—David Platt Shares Backstory, Growth of Secret Church

Dr. Ed Stetzer reached out to his friend David Platt, pastor of McLean Bible Church in Washington, D.C., and the founder of Radical, and asked him to share his heart and passion for Secret Church.

How Event Evangelism Helps People Share the Mission

One of the tools we can use for training people to get comfortable talking with others, inviting them to our weekend worship gatherings, and eventually sharing their faith is event evangelism.

Beyond the Decorations: Christmas Reveals the Person and Character of God

The Christmas story teaches us that God understands our own stories. During this season, we celebrate the coming of our Savior and King. The biblical story unfolds the need for a Savior and the promise of his coming.

So You Are Starting Seminary

Here are some thoughts for every student starting seminary this fall—with application for any stage of the seminary journey.

Shadow Side of Mission: Fostering Learning and Healing in Missions

An important link between our identity as God’s missional people and our participation in God’s mission is the kingdom ethic that gives shape to the shared life of God’s holy, yet imperfect people.