What will we do when AI goes to war?

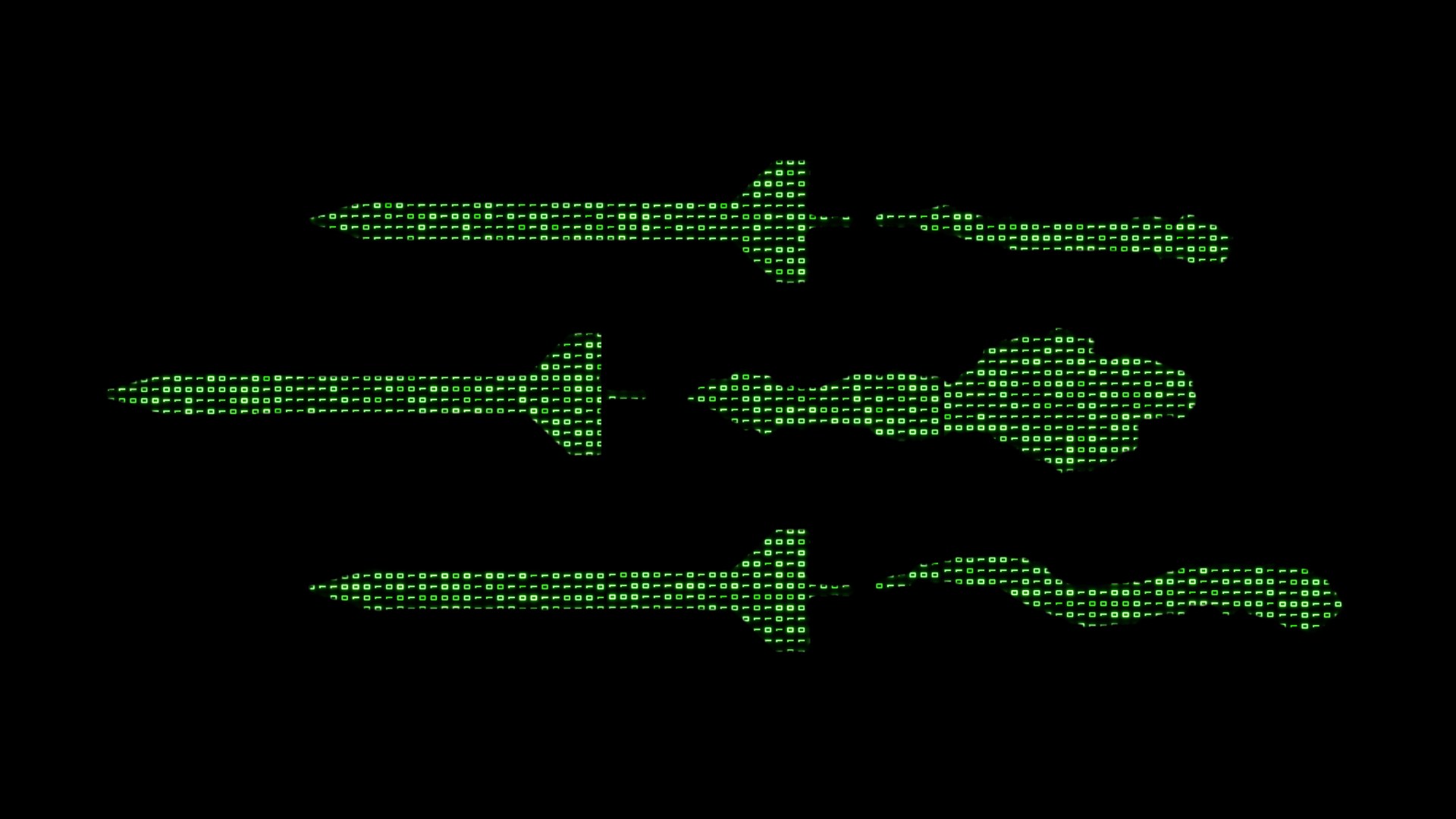

After all, a future of “killer robots” isn’t far off. We have unmanned aircraft already: the US pioneered drone warfare in the Middle East in the post-9/11 era, and subsequent conflicts, including Russia’s invasion of Ukraine, have led other countries and combatants to get in on the action. The technology needed to make drones, drone swarms, and other weapons operate autonomously is in active development or, more likely, already exists. The question isn’t whether we’ll soon be able to bomb by algorithm, but whether we’ll judge it good and right.

That’s the future Lt. Gen. Richard G. Moore Jr., deputy chief of staff for plans and programs of the US Air Force, was considering when he made widely reported comments about ethics in AI warfare at a Hudson Institute event last week. While America’s adversaries may use AI unethically, Moore said, the United States would be constrained by a foundation of faith.

“Regardless of what your beliefs are, our society is a Judeo-Christian society, and we have a moral compass. Not everybody does,” he argued, “and there are those that are willing to go for the ends regardless of what means have to be employed.”

Mainstream headlines about Moore’s remarks were subtly but unmistakably skeptical. “‘Judeo-Christian’ roots will ensure U.S. military AI is used ethically, general says,” said a representative example at The Washington Post, to which Moore sent a statement clarifying that his aim was merely “to explain that the Air Force is not going to allow AI to take actions, nor are we going to take actions on information provided by AI unless we can ensure that the information is in accordance with our values.”

A write-up at the progressive blog Daily Kos, meanwhile, was open in its disapproval, calling Moore’s words an “only-Christians-can-be-moral statement.” It scoffed at the very notion of Christian ethical restraint in light of violent passages in the Old Testament and an Air Force training module on the ethics of nuclear weapons, suspended from use in 2011, in which the religious section was reportedly nicknamed the “Jesus loves nukes speech.”

The Kos article is sardonic and unfair—but it’s not wholly wrong. The fact is, the United States government has done terrible things in battle. However good our intentions, and however awful our adversaries, our country has done undeniable evil in war as a matter of official policy and unofficial practice alike.

Enabling Saudi coalition war crimes in Yemen, deliberately bombing a hospital in Iraq, conducting drone strikes against unknown targets, assassinating a 16-year-old American without trial, perpetrating the My Lai Massacre in Vietnam, nuking and indiscriminately firebombing whole cities of civilians, ransacking churches and mowing down civilians “coming toward the lines with a flag of truce” in the Philippines—the list is long and grim and could be much longer and grimmer. These are just a few examples that quickly come to mind. They’re also all examples from the past, including eras in which our society was more distinctively Judeo-Christian, to use Moore’s phrase, than it is today.

Given that history, count me among the skeptical who doubt that America’s cultural roots will provide enough of a moral compass to keep our government from succumbing to the temptation of unethical AI warfare. I wish our norms could be trusted as a safeguard here, but I don’t believe they can.

That’s not because of a deficiency in Christian ethics, as the Daily Kos post charges. I have every confidence that Jesus hates nukes, that he is the Prince of Peace (Isa. 9:6), that he was entirely serious and literal when he told us to love our enemies (Matt. 5:43–48), that he modeled that love to the death (Rom. 5:8), and that his return will mean an end of war and its crimes (Rev. 21:3–4).

But there is a deficiency in each of us, including the Pentagon decision-makers who’ll be responsible for handling military AI. The “line separating good and evil passes not through states, nor between classes, nor between political parties either—but right through every human heart—and through all human hearts,” Aleksandr Solzhenitsyn famously wrote in The Gulag Archipelago.

That’s as true of AI use as of anything else. If we aren’t careful, US use of AI in conflict won’t look much different from that of our adversaries. Leaders in Washington and foreign capitals alike will be tempted to apply AI unethically, justifying it as an ostensibly necessary evil.

Moore himself seems to understand that risk, as the full context of his comments makes clear. Part of the Defense Department’s budget for 2024, he said, is funding to study ethical AI use in warfare. We might decide there are decisions about employing lethal force that would be better made by an algorithm “that never gets hot and never gets tired and never gets hungry” rather than by a young, weary soldier fearing for his life, Moore explained, but the ethical foundation must come first. This is a conversation, he reported, “being had at the very highest levels of the Department of Defense.”

The study Moore described is a good start, but even more important is what policymakers do with its results and how they do it. Executive or departmental orders are not good enough. As we’ve seen with drone-strike rules, these are nonbinding memoranda that can be changed, for better and worse, from one administration to the next. The result is ethical whiplash and more dead civilians.

What we need are laws, duly passed by Congress, signed by the president, and comparatively difficult to change. Lawmakers and the Pentagon should make it a priority to develop careful, formal constraints on what our government can do with artificial intelligence on the battlefield and beyond, including toothy consequences for those who stray outside those bounds.

It sounds almost silly to say so, but killer robots are coming, and we can’t rely on norms or executive-branch policy memos to keep them in check. We need clear AI ethics, and we need them inscribed in law.